Video data for robots may seem easy to record, but typical videos are nearly impossible for real-world robots to learn from. While a standard tripod-mounted or cinematic video might look clear to a human, it often falls short as robotics training data.

Robots in real life rely on detailed interaction cues, consistent viewpoints, and complete action sequences. For a robot to interact in real-life scenarios, seeing an action isn’t enough. It needs to observe actions from an agent’s perspective.

This is where egocentric data collection becomes crucial. Egocentric data captures interactions from a first-person perspective, aligned with an agent performing a task. Instead of observing actions from the outside, robotics models can learn directly from how tasks are seen and executed in real life.

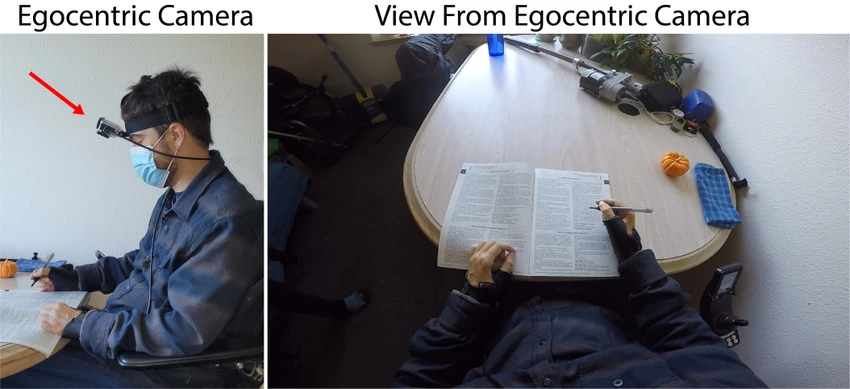

Egocentric Data Vs. Normal Data (Source)

In other words, egocentric data serves as structured sensor data. By using wearable or onboard cameras that move in sync with the agent, this type of robot data captures the exact viewpoint shifts, natural occlusions, and hand-object interactions in a task.

These characteristics make egocentric data fundamentally different from standard videos and far more valuable for training real-world robots. In this article, we’ll explore how egocentric robot data works and why it plays a critical role in training robots for various environments. Let’s get started!

Egocentric data collection is not defined solely by the perspective it’s captured from. For robotics data, what matters more is how precisely that perspective is captured and maintained throughout an interaction.

Even small inconsistencies, such as shifts in framing and brief occlusions, can reduce interaction clarity and weaken the learning signals. This level of precision is especially important for robots operating in varied real-world situations, where even small perception errors can lead to incorrect or unsafe actions.

The reliance on precise capture introduces a few critical failure points. Some of the most important factors related to egocentric robot data are as follows:

High-quality egocentric data is defined by stability, visibility, and consistency throughout the interaction. These properties determine whether a recorded task can be reliably understood and learned by a model.

For example, let’s consider a simple task like picking up a mug, moving it, and placing it on a table.

The viewpoint needs to remain head-aligned and stable throughout the interaction. Without this alignment, the camera no longer reflects how the action is actually performed.

When the camera moves naturally with the participant and keeps the interaction area centered, models can better interpret the sequence of actions. Sudden shifts, poor alignment, or drifting angles introduce ambiguity and weaken spatial understanding.

An Example of How Egocentric Data is Collected for Robots in Real Life (Source)

Hand–object visibility is equally important. Hands convey intent, while objects represent task state. When both are fully visible during manipulation, models can learn how actions unfold step by step. If visibility breaks during critical moments, the structure of the task becomes harder to recover.

Motion quality also plays a key role. Natural, continuous head and hand movements provide timing and behavioral cues that support action recognition and manipulation learning. Abrupt or inconsistent motion disrupts these signals and weakens temporal coherence.

Across recordings, consistency matters more than volume. If the same task is captured with varying framing, motion, or visibility across sessions, adding more data won’t resolve the issue. In egocentric data collection for robotics, precision and repeatability outweigh the total number of recorded hours.

Interestingly, the quality of egocentric data is shaped long before the recording begins. Small decisions about hardware and camera placement can make the difference between clear, usable interaction signals and footage that falls short for training. It all starts with the recording device itself and how the camera is positioned on the participant.

Next, let’s walk through the key setup considerations that ensure robots in real life receive high-quality, reliable training data.

The recording device directly affects how well actions and interactions are preserved. To capture a true first-person perspective, the hardware has to mimic the human visual field as closely as possible.

For this reason, head-mounted devices, such as smartphones mounted at eye level, provide the most reliable setup for egocentric data collection. As the camera moves naturally with the participant’s head, it maintains the natural alignment between visual input and physical action.

Other setups come with limitations. Handheld recordings create unstable motion, as the participant controls both the task and the camera. Meanwhile, chest-mounted cameras shift the viewpoint lower, often reducing hand visibility and altering how objects appear during manipulation. In both cases, the disconnect between action and visual capture weakens the learning signal.

A Look at the Setup for Recording Egocentric Data and Robot Data

For best results, the camera should be positioned at forehead or eye level and tilted downward by around 45 degrees. This placement keeps both hands and manipulated objects consistently visible throughout the task and helps preserve complete interaction sequences.
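To get a feel for what a downward tilt of this size implies, the short sketch below estimates where the camera's optical axis meets a flat work surface. The function name, the 0.5 m camera height, and the flat-table geometry are illustrative assumptions, not values from a specific capture protocol.

```python
import math


def axis_hit_distance(camera_height_m: float, tilt_deg: float) -> float:
    """Horizontal distance at which the optical axis meets the work surface.

    camera_height_m: vertical distance from the camera to the surface (assumed).
    tilt_deg: downward tilt measured from horizontal, strictly between 0 and 90.
    """
    if not 0 < tilt_deg < 90:
        raise ValueError("tilt_deg must be between 0 and 90 (exclusive)")
    return camera_height_m / math.tan(math.radians(tilt_deg))


# Hypothetical seated setup: camera roughly 0.5 m above the table.
for tilt in (15, 45, 75):
    distance = axis_hit_distance(0.5, tilt)
    print(f"{tilt} deg tilt: axis meets the table {distance:.2f} m ahead")
```

At a 45 degree tilt, the axis meets the surface at a distance equal to the camera height, which tends to place the center of the frame within arm's reach. Shallower tilts push the center of the frame farther away from the hands.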

Video quality plays a direct role in how usable egocentric data is for training robots in real life. Even with the right hardware, poorly chosen video recording settings can weaken interaction signals and reduce learning effectiveness.

Resolution needs to be high enough to preserve fine interaction details. Settings below 1080p often miss hand poses, object edges, and contact points that are essential for manipulation learning.

Frame rate also affects clarity. While 30 FPS captures general actions, higher frame rates, such as 60 FPS, better preserve fast hand movements. This becomes especially important for tasks that involve precise or rapid manipulation.
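A quick back-of-the-envelope calculation shows why frame rate matters for fast movements. The sketch below computes how far a hand travels between consecutive frames. The 1 m/s hand speed is an assumed figure for a quick reach, not a measured value.

```python
def displacement_per_frame_mm(speed_m_per_s: float, fps: float) -> float:
    """Distance a point moves between two consecutive frames, in millimetres."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return speed_m_per_s / fps * 1000.0


hand_speed = 1.0  # metres per second, an assumed speed for a quick reach
for fps in (30, 60):
    gap = displacement_per_frame_mm(hand_speed, fps)
    print(f"{fps} FPS: hand moves about {gap:.1f} mm between frames")
```

Doubling the frame rate halves the distance the hand covers between frames, so contact moments and grasp changes are less likely to fall between two samples.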

Orientation is another important parameter because it influences spatial understanding. Landscape recording preserves the full horizontal workspace and maintains context around the interaction area. On the other hand, vertical footage narrows the field of view and often limits consistent visibility of hands and objects during key task phases.

Beyond this, focus and motion stability have to remain consistent throughout the recording. Blur, sudden shifts, or focus loss break interaction continuity and weaken action modeling.
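The resolution, frame rate, and orientation guidelines above can be turned into a simple pre-ingestion check. The following is a minimal sketch: `ClipInfo` and `check_clip` are hypothetical names, and the width and height are assumed to be the displayed dimensions after any rotation metadata has been applied.

```python
from dataclasses import dataclass


@dataclass
class ClipInfo:
    """Display dimensions and frame rate of one recording (hypothetical schema)."""
    width: int
    height: int
    fps: float


def check_clip(info: ClipInfo, min_short_side: int = 1080, min_fps: float = 30.0) -> list:
    """Return a list of issues; an empty list means the clip passes these checks."""
    issues = []
    # "1080p" refers to the shorter side of the frame, however the device is held.
    if min(info.width, info.height) < min_short_side:
        issues.append(f"resolution {info.width}x{info.height} is below {min_short_side}p")
    if info.fps < min_fps:
        issues.append(f"frame rate {info.fps:g} FPS is below {min_fps:g} FPS")
    # Landscape means the frame is wider than it is tall.
    if info.width <= info.height:
        issues.append("clip is not in landscape orientation")
    return issues


print(check_clip(ClipInfo(width=1920, height=1080, fps=60)))
print(check_clip(ClipInfo(width=720, height=1280, fps=24)))
```

For tasks with rapid manipulation, `min_fps` could be raised to 60. Focus and blur are not covered here, since they require inspecting the frames themselves.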

Clear visibility of hands and objects is central to egocentric data quality. In first-person recordings, hands convey intent, while objects represent task state. When hand visibility breaks, the meaning of the action becomes harder to infer.

A Frame from a Real-Life Example of Egocentric Data (Source)

Egocentric datasets should account for both one-hand and two-hand tasks, as interaction patterns differ between them. Recordings need to clearly capture the role and coordination of each hand during manipulation. Occlusions should be minimized, as clothing, sleeves, or body positioning can block critical moments of interaction and reduce clarity.
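One way to quantify occlusion is to summarize per-frame visibility flags for a clip. The sketch below assumes an upstream step (a hand and object detector or a human reviewer, neither shown here) has already produced one boolean per frame. The function name and the flag format are hypothetical.

```python
def visibility_stats(visible: list, fps: float) -> tuple:
    """Return (fraction of frames with hands and object visible,
    longest continuous occluded gap in seconds)."""
    if not visible:
        raise ValueError("visible must contain at least one frame")
    if fps <= 0:
        raise ValueError("fps must be positive")
    longest_gap = current_gap = 0
    for is_visible in visible:
        # Reset the gap counter on a visible frame, extend it otherwise.
        current_gap = 0 if is_visible else current_gap + 1
        longest_gap = max(longest_gap, current_gap)
    fraction = sum(1 for v in visible if v) / len(visible)
    return fraction, longest_gap / fps


# Hypothetical flags for a very short clip recorded at 30 FPS.
flags = [True, True, False, False, False, True]
fraction, gap_seconds = visibility_stats(flags, fps=30)
print(f"visible in {fraction:.0%} of frames, longest gap {gap_seconds:.2f} s")
```

The longest gap is often more informative than the overall fraction, because a single long occlusion during a grasp can hide the part of the task that matters most.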

Head movement also plays an important role. Natural, steady motion preserves realistic interaction flow, while abrupt or jerky movement introduces instability into the recording. Embodied AI systems depend on smooth, continuous sequences rather than isolated visual moments.

Egocentric robot data is most useful when it captures the full flow of an interaction. Tasks typically move from picking up an object to holding it, manipulating it, and reaching a clear end state.

Recording the entire sequence allows models to understand how actions begin, transition, and conclude. When intermediate steps are missing, it becomes difficult to determine where one action ends and the next begins.

Consider a cloth folding sequence. If a recording shows only the garment being picked up but not the completed fold, the model never observes the final state that it is expected to learn.

The same issue appears in tasks involving multiple objects handled in sequence, where skipping even a single step breaks the logical flow of the interaction and reduces dataset reliability.
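If clips are annotated with phase labels, a completeness check can flag recordings that skip a step. This is a minimal sketch: the label names mirror the phases described above but are hypothetical, as is the assumption that each clip comes with an ordered list of labels.

```python
EXPECTED_PHASES = ("pick_up", "hold", "manipulate", "end_state")


def missing_phases(observed: list, expected=EXPECTED_PHASES) -> list:
    """Return the expected phases that do not appear, in order, in `observed`."""
    missing = []
    position = 0  # index in `observed` from which the next phase must be found
    for phase in expected:
        try:
            position = observed.index(phase, position) + 1
        except ValueError:
            missing.append(phase)
    return missing


complete = ["pick_up", "hold", "manipulate", "end_state"]
truncated = ["pick_up", "manipulate"]
print(missing_phases(complete))
print(missing_phases(truncated))
```

A clip with an empty result shows every phase in the expected order. Anything else can be routed for review or re-recording before it reaches training.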

However, once an interaction reaches a clear and complete end state, objects moving out of view don’t affect learning. By that point, the model has already observed the full interaction sequence it needs to understand the task.

So far, we have covered hardware setup, camera positioning, video quality, and interaction visibility. Each of these factors directly influences how effectively robots in real life learn from recorded tasks. Next, let’s discuss how to ensure these recordings remain reliable and usable throughout the training process.

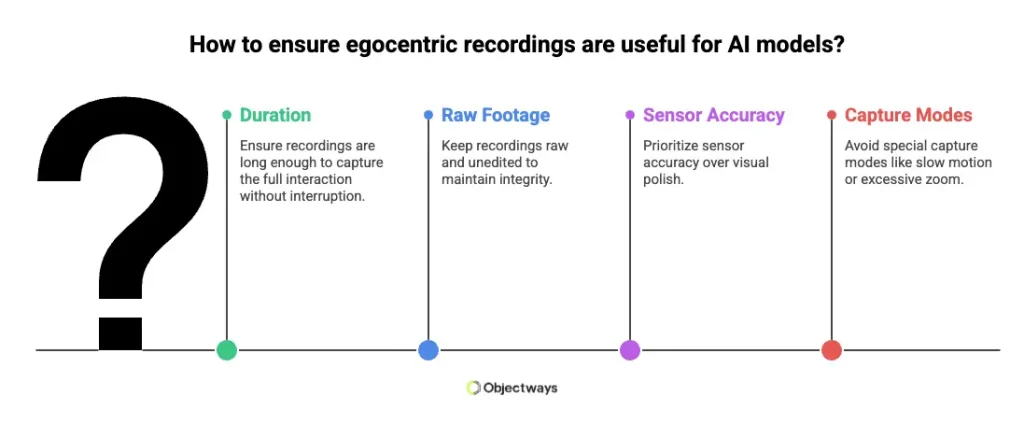

Egocentric recordings need to be long enough to capture the full interaction without interruption. The exact duration depends on the task, but most usable sequences fall between 20 seconds and 15 minutes. What matters isn’t the length itself, but whether the interaction is shown clearly from start to completion.
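The duration range above can serve as a soft screening rule. The sketch below derives a clip's length from its frame count and frame rate and flags clips outside the 20 second to 15 minute window. The function names are hypothetical, and an out-of-range clip is treated as a candidate for review rather than an automatic rejection, since completeness matters more than length.

```python
MIN_SECONDS = 20
MAX_SECONDS = 15 * 60  # 15 minutes


def duration_seconds(frame_count: int, fps: float) -> float:
    """Clip length in seconds, derived from frame count and frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return frame_count / fps


def duration_in_range(seconds: float) -> bool:
    """True when the clip length falls inside the typical usable window."""
    return MIN_SECONDS <= seconds <= MAX_SECONDS


length = duration_seconds(frame_count=1800, fps=30)
status = "within range" if duration_in_range(length) else "flag for review"
print(f"{length:.0f} s: {status}")
```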

How footage is preserved is equally vital. Keeping recordings raw and unedited maintains the integrity of the captured interaction. Editing or post-processing can unintentionally remove subtle motion and timing cues that models rely on during learning.

How to Ensure Egocentric Recordings Are Useful

In egocentric data collection, sensor-level accuracy matters more than visual polish. Minor lighting changes or natural camera movement are acceptable if the interaction remains visible. However, enhancements such as heavy light correction, artificial stabilization, or contouring can distort motion and depth information.

Special capture modes are also not recommended. Slow motion alters natural timing, and excessive zoom restricts visibility of hands and objects. Reliable action modeling depends on complete, unaltered, and consistently framed recordings.

Even when you follow best practices, egocentric data collection can still come with real-world challenges. Recording outside of controlled lab conditions means things don’t always go as planned, and small issues can affect how clearly interactions are captured for robots in real life.

Here are the common challenges that you may face when capturing egocentric robot data:

In computer vision applications, the quality of the training data often affects a model’s performance more than its architecture does. In egocentric applications, models trained on incomplete or inconsistent examples will struggle to replicate actions.

Issues such as visual noise or temporal gaps can affect representation learning, trajectory prediction, and evaluation outcomes. High-performing embodied AI systems rely on structured data capture and consistent validation.

That is why many teams choose to work with experienced partners who understand the technical and operational demands of collecting high-quality data at scale.

At Objectways, egocentric data is developed through an end-to-end workflow that transforms first-person recordings into model-ready datasets. This approach reduces common risks and supports scalable deployment.

For teams planning to build high-quality embodied AI datasets to train robots in real life or working on egocentric applications, reach out to us.

Egocentric robot data plays a key role in training robots in real life for different environments. The quality of data directly influences how effectively models learn, adapt, and perform under dynamic conditions. Strong data foundations improve training stability and lead to more reliable, consistent outcomes.

If you’re working on an embodied AI project, connecting with experienced teams can make a meaningful difference. Objectways supports structured egocentric robot data development, helping transform first-person recordings into model-ready datasets.

Yes, robots in real life are widely used in factories, warehouses, hospitals, agriculture, and homes. They perform specific tasks using sensors, AI, and structured robot data to operate safely.