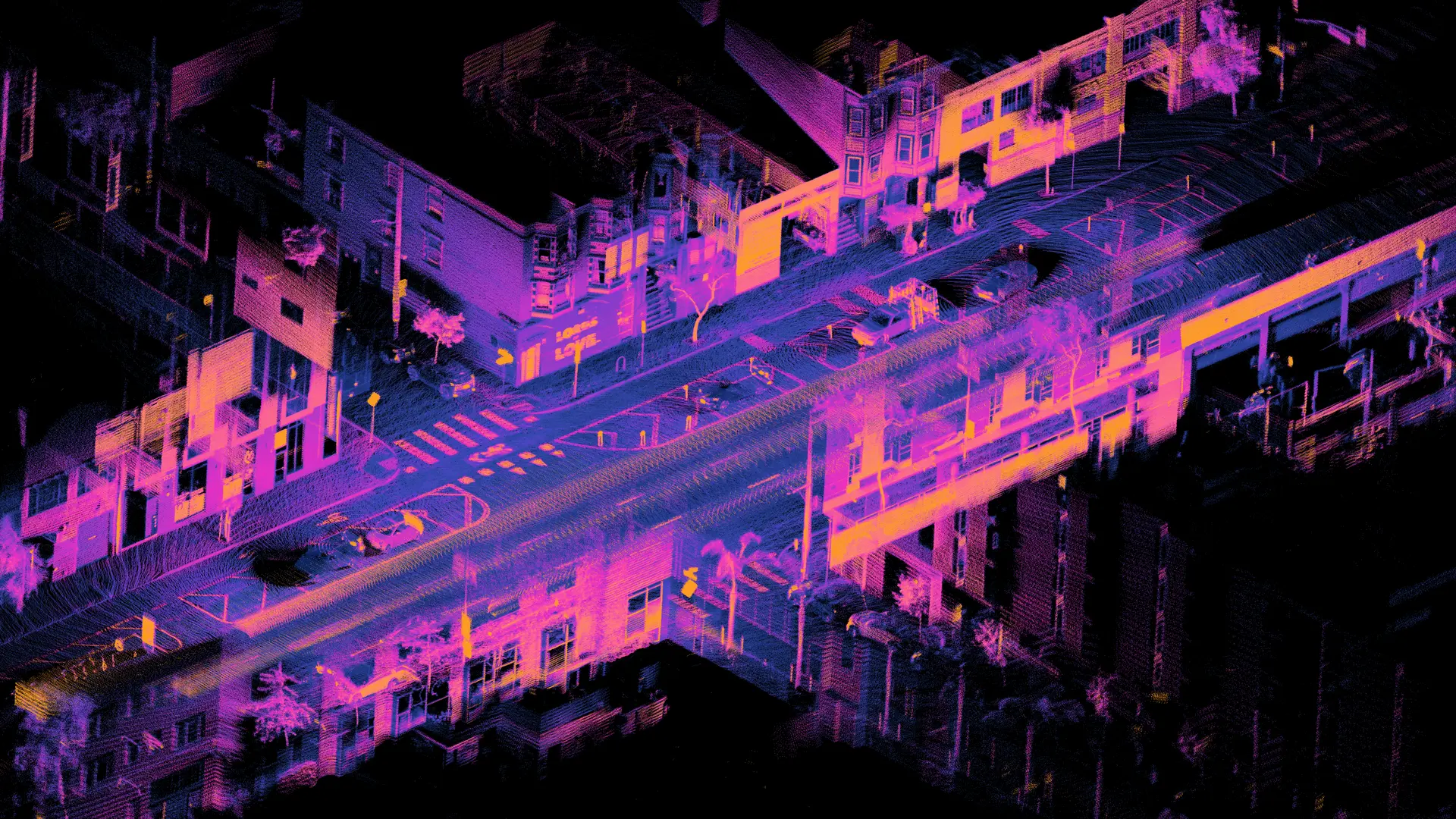

Learn what 3D point cloud annotation is, how it powers robotics perception, and why spatial AI depends on accurate 3D training data.

See how imitation learning helps robots learn from human demonstrations and why data quality is essential for building reliable physical AI systems.

Robotics in manufacturing depends on more than hardware. See how data annotation powers perception, automation, and reliable robot performance in factories.

See how multimodal data like RGB, depth, audio, and motion data help physical AI systems understand environments and improve real-world decision-making.

Teleoperation helps turn human actions into training data for robots. Learn how real-world teleoperation data and pipelines improve robot learning and autonomy.

Explore how motion capture data and egocentric data are shaping the future of robotics by enabling smarter, more adaptive robot training.

Explore how robot data collection methods power physical intelligence, including egocentric data, teleoperation data, RGB-D data, UMI data, and motion capture data.

Learn why egocentric data is essential for training robots in real life, how it differs from normal video standards, and what it takes to capture...