When you guide someone’s hands while they learn a new skill, like shaping clay, they learn by mirroring your movements in real time. A direct demonstration shows them both what to do and how it feels to do it.

Teleoperation in robotics works similarly. It involves a human directly controlling a robot and guiding it step by step. These actions can be recorded as demonstrations and used to train a model, which can then be deployed on the robot to perform the task more independently.

This hands-on control allows the robot to capture precise movements and interactions, turning human expertise into data. For example, in a recent demonstration in China, a humanoid robot in Hangzhou was controlled by a human operator located over 1,200 kilometers away in Beijing. The operator guided the robot in real time to perform tasks like handing over a towel and passing pieces of fruit, which required precise and coordinated movements.

Despite the distance, the interaction felt seamless, with every adjustment in grip and motion happening almost instantly. This showed how teleoperation can enable fine, human-like manipulation across locations while also generating high-quality data for training robotic systems.

Similar systems are being developed and deployed across industries, from manufacturing and logistics to healthcare and service robotics. In fact, adoption of teleoperation in robotics is growing steadily worldwide.

The teleoperation systems market is projected to reach about $2.3 billion by 2030. Teleoperation is increasingly becoming an essential part of cutting-edge robotics, where learning is guided by experience gathered through human-controlled actions.

Let’s take a closer look at how teleoperation turns human actions into training data and drives robot autonomy.

Teleoperation in robotics refers to controlling a robot from a distance, often in real time, with the robot following human input.

The person behind the controls is called a teleoperator. They guide movements through camera feeds and sensor data, reacting to what they see as the task happens.

The teleoperated robot executes these commands step by step, performing actions that can range from picking up simple objects to handling complex tools.

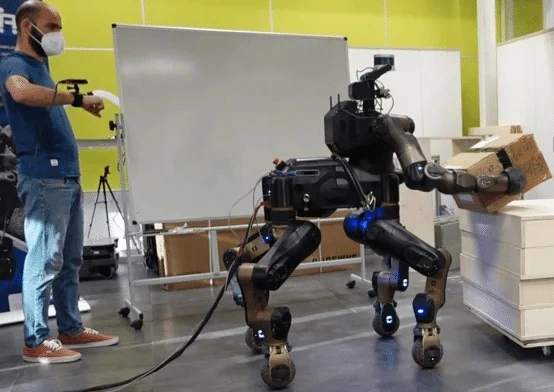

An Example of Teleoperation in Robotics Being Used to Perform a Task (Source)

Something to keep in mind is that the environments these robots operate in are rarely stable. Real-world settings are constantly changing. Objects shift, positions vary, and conditions evolve from moment to moment. Even simple tasks can play out differently each time, making consistent execution tricky.

Teleoperation makes it possible for a human to stay in the loop and guide the robot in real time. The teleoperator can make small adjustments based on what they see, helping the robot handle variations that would be difficult to pre-program.

At the same time, these interactions can be recorded as demonstrations. Over time, they can be built into a dataset that captures how tasks are actually performed in dynamic, real-world conditions. This data can then be used to train models that enable the robot to perform similar tasks more independently in the future.

When it comes to teleoperation, the experience largely depends on how control is delivered. In other words, the interface between the operator and the robot defines how information flows, how fast the robot responds, and how natural the interaction feels.

Here are some of the most common interfaces used to operate a robot remotely:

Next, let’s discuss why teleoperated data collection is crucial.

Teleoperated data is valuable because it captures how tasks are actually performed. This can even include small adjustments that shape the final outcome.

For example, consider an operator guiding a robot to pick up an object and place it elsewhere. The operator might adjust the robot’s grip, correct the angle, and respond to subtle changes along the way. These details may seem minor, but they significantly affect how the task is completed, and each step can be recorded as it happens.

Over time, these recordings build into a collection of examples that reflect real-world task execution. This data is then used for robotics training, often through imitation learning or related approaches, enabling robots to replicate actions or make decisions based on similar situations.

So, how is teleoperated data actually turned into training data? When a human controls a robot, every observation the robot receives and every action it takes can be recorded together. These paired observations and actions form examples that models can learn from, letting the robot understand how to respond in similar situations.
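To make the idea of paired observations and actions concrete, here is a minimal sketch in Python. It is only an illustration under simple assumptions: the `Step` and `Demonstration` classes, the two-number observations, and the `nearest_neighbor_policy` function are all hypothetical names invented for this example, and the nearest-neighbor lookup stands in for the far larger models used in practice.

```python
from dataclasses import dataclass, field
import math


@dataclass
class Step:
    """One recorded moment: what the robot observed and what the operator did."""
    observation: tuple[float, ...]  # hypothetical: object offset (x, y) in meters
    action: tuple[float, ...]       # hypothetical: commanded motion (dx, dy) in meters


@dataclass
class Demonstration:
    """A single teleoperated run of a task, stored as observation-action pairs."""
    task: str
    steps: list[Step] = field(default_factory=list)

    def record(self, observation, action):
        # Store the observation and the operator's action together.
        self.steps.append(Step(tuple(observation), tuple(action)))


def nearest_neighbor_policy(demos, observation):
    """Return the recorded action whose observation is closest to the query."""
    all_steps = [step for demo in demos for step in demo.steps]
    best = min(all_steps, key=lambda s: math.dist(s.observation, observation))
    return best.action


# Record a short, made-up demonstration.
demo = Demonstration(task="pick_and_place")
demo.record(observation=(0.30, 0.10), action=(0.05, 0.00))
demo.record(observation=(0.10, 0.05), action=(0.02, 0.01))
demo.record(observation=(0.00, 0.00), action=(0.00, 0.00))

# Ask the "policy" what to do in a situation it has not seen exactly.
predicted = nearest_neighbor_policy([demo], observation=(0.12, 0.04))
print(predicted)
```

The point of the sketch is the data layout rather than the model: once each observation is stored next to the action taken at that moment, any learning method that maps observations to actions can be trained on the same records.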

Here’s an overview of the steps involved:

While teleoperation is useful for collecting demonstrations, it does have some limitations. What gets recorded depends on what the operator can see and control, which means some details about the environment or physical interaction may be missed.

To make up for this, additional types of data are often used alongside teleoperated demonstrations. Two important ones are egocentric data and multi-sensor data.

Egocentric data captures the task from the robot’s or operator’s point of view. This keeps the visual input aligned with the actions being performed, so the model learns from the same perspective it will use during execution.

Multi-sensor data adds more detail beyond vision. In addition to camera input, systems can record depth, force, and motion signals. This can include motion capture (MoCap) data and gripper-specific signals, which give a clearer picture of how movements and interactions happen.

Each signal captures a different part of the task. Cameras show what the robot sees, depth adds spatial context, and force sensors reflect how the robot interacts with objects. Motion and gripper data further describe how the action is carried out.

When combined with teleoperated demonstrations, these signals create a more complete dataset. This makes it easier for models to understand different tasks and handle variations more reliably.
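Combining signals usually means lining them up in time, since cameras, force sensors, and grippers rarely report at the same rate. The sketch below shows one simple way to do that: for each camera frame, take the most recent reading from every other stream. The stream names (`force_n`, `gripper_width_m`), the timestamps, and the helper functions are assumptions made up for illustration, not part of any specific toolkit.

```python
from bisect import bisect_right


def latest_at_or_before(timestamps, values, t):
    """Return the most recent value recorded at or before time t, else None."""
    i = bisect_right(timestamps, t)
    return values[i - 1] if i > 0 else None


def align_streams(frame_times, streams):
    """Build one record per camera frame with the latest reading from each stream.

    `streams` maps a stream name to a pair (sorted timestamps, values).
    """
    records = []
    for t in frame_times:
        record = {"t": t}
        for name, (times, values) in streams.items():
            record[name] = latest_at_or_before(times, values, t)
        records.append(record)
    return records


# Hypothetical recordings: camera frames every 0.10 s, force every 0.04 s,
# and gripper width reported only occasionally.
frame_times = [0.00, 0.10, 0.20]
streams = {
    "force_n": ([0.00, 0.04, 0.08, 0.12, 0.16], [0.0, 0.5, 1.2, 1.1, 1.3]),
    "gripper_width_m": ([0.05, 0.15], [0.08, 0.03]),
}

aligned = align_streams(frame_times, streams)
for row in aligned:
    print(row)
```

Taking the latest earlier reading avoids using information from the future, which matters if the same alignment is later applied while the robot is running. Interpolating between readings is another reasonable choice when the data is only used offline.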

Robots are becoming easier to spot nowadays, whether they’re operating at an airport or cleaning floors in a shopping mall. Many of these systems rely on teleoperation to handle tasks that still need human input and control.

Next, let’s take a look at where teleoperation is used in real-world settings.

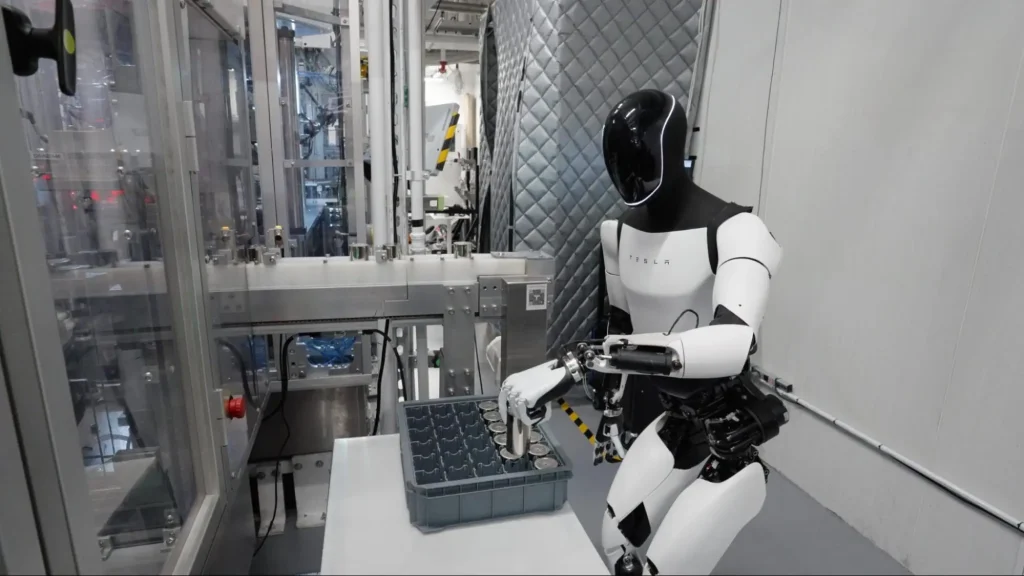

Some early versions of Tesla’s robots relied heavily on teleoperation, with operators stepping in to guide actions and correct mistakes in real time. For example, Tesla’s Optimus robot can be used to sort objects on a factory line. The robot’s movements appear controlled and deliberate, but much of that comes from human guidance behind the scenes.

Tesla’s Optimus Learns Manufacturing Tasks Through Teleoperation (Source)

The influence of teleoperation is growing in manufacturing, where tasks are rarely identical. Objects aren’t always placed the same way, materials can behave differently, and even simple actions often require small adjustments.

Teleoperated robots offer a clear advantage in these settings. Operators can guide the robot through these variations in real time, handling edge cases and making small decisions along the way. Each session captures not just what is done, but how those decisions are made.

Similarly, in a busy warehouse, operations need to keep moving even when something unexpected happens. A forklift may need to navigate a tight corner, or a path may suddenly be blocked by misplaced inventory.

Situations like these are often difficult for fully autonomous systems to handle on their own. When the system reaches its limit, a remote operator can step in and guide the vehicle using live video and control systems.

This approach is already being used with equipment like forklifts and yard trucks, where control can shift between autonomy and a human as needed, keeping operations running smoothly.

Teleoperation of a Forklift in a Warehouse Environment (Source)

What makes these situations unique is how they are handled. Operators don’t follow fixed steps. Instead, they respond to cluttered layouts, moving obstacles, and tight spaces in real time. These decisions keep operations running smoothly, even when the environment behaves unpredictably.
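The handoff between autonomy and a remote operator described above can be sketched as a small decision rule. This is a simplified illustration, not how any particular forklift or yard truck system works: the `Command` class, the confidence score, and the 0.7 threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Command:
    speed: float     # meters per second
    steering: float  # radians
    source: str      # "autonomy", "operator", or "safe_stop"


def arbitrate(
    autonomy_cmd: Command,
    autonomy_confidence: float,
    operator_cmd: Optional[Command],
    threshold: float = 0.7,  # hypothetical cutoff for trusting autonomy
) -> Command:
    """Pick which command the vehicle should follow on this control cycle."""
    if autonomy_confidence >= threshold:
        # Autonomy is confident enough to keep driving on its own.
        return autonomy_cmd
    if operator_cmd is not None:
        # Autonomy is unsure and a remote operator is connected: hand over.
        return operator_cmd
    # Autonomy is unsure and nobody is connected: stop and wait for help.
    return Command(speed=0.0, steering=0.0, source="safe_stop")


auto = Command(speed=1.5, steering=0.0, source="autonomy")
human = Command(speed=0.4, steering=0.2, source="operator")

clear_aisle = arbitrate(auto, autonomy_confidence=0.95, operator_cmd=None)
blocked_path = arbitrate(auto, autonomy_confidence=0.30, operator_cmd=human)
no_operator = arbitrate(auto, autonomy_confidence=0.30, operator_cmd=None)

print(clear_aisle.source, blocked_path.source, no_operator.source)
```

Logging which branch was taken on each cycle, along with the operator’s commands, is one way these interventions can double as demonstrations for later training.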

Another crucial use case of teleoperated robots is in healthcare. Surgical procedures leave very little room for error. A few millimeters can make a difference, especially when working around delicate tissue.

In such settings, teleoperated robots extend the surgeon’s control rather than replace it. The surgeon operates from a console, while robotic arms carry out each movement with a level of steadiness that is difficult to maintain by hand. This type of surgery can even be performed remotely, with the doctor and patient separated by a long distance.

What makes this setup stand out is the control it offers. The robot doesn’t act on its own. Every motion comes directly from the surgeon, translated in real time into precise actions inside the patient’s body.

A well-known example is the da Vinci Surgical System, used in minimally invasive procedures across areas like urology, gynecology, and general surgery. It enables surgeons to work through smaller incisions while maintaining a high level of precision.

The da Vinci Surgical System Enables Precise Teleoperated Surgery (Source)

While systems like this are designed for direct human control, they also generate valuable data. Each movement made by the surgeon, along with the robot’s response, can be recorded during procedures or training.

This data can be used to capture expert techniques, including motion patterns and tool control. In research settings, it is used to train models that assist with specific tasks, such as improving precision or guiding movements.

However, in healthcare, this process is carefully controlled. Data is typically collected in simulations or supervised environments, with a strong focus on safety and reliability.

Here are some key challenges to consider when working with teleoperated data:

Handling these challenges becomes seamless when you partner with experts who understand teleoperated data end-to-end. That’s exactly what Objectways delivers.

At Objectways, we understand the need for high-quality, well-structured robotics data to build reliable and scalable systems. To support your robotics initiatives, we offer end-to-end data solutions, from teleoperation workflows to egocentric and multimodal data capture.

Robot learning is moving toward systems that learn through interaction and adapt to real environments.

There is a strong focus on embodied AI, where learning comes from doing tasks in the real world, rather than from static data. This makes interaction-driven data much more critical.

There is also a growing need to scale human demonstrations. A handful of demonstrations isn’t enough to capture the variability of real-world conditions or to train models that generalize effectively. Teleoperation is becoming a more structured way to capture expert behavior across many tasks.

Another trend is the use of both synthetic and real-world data. Synthetic data helps with scale, while real data brings in real-world variation. Using both together leads to better training results.

All of this is pushing robots to become more capable and adaptable. Learning continues to improve through real interactions and better data, bringing systems closer to reliable performance in real environments.

Teleoperation is redefining how robots learn from real-world actions. It connects human input with machine learning and brings real context into training. Each interaction adds depth to the data, making it easier for robots to handle tasks outside controlled environments.

Real progress depends on the data behind these systems. Teams that invest in strong, scalable data pipelines can train models more effectively and handle real-world variation with greater confidence. Better data leads to more consistent performance and fewer gaps during deployment.

If you’re building a robotics system and are facing challenges around data, we can help. Get in touch with our team to see how Objectways can support your data pipeline.

Teleoperation refers to controlling a robot or machine from a distance. A human sends commands, often in real time, and the robot executes them using feedback from cameras and sensors.