Factory floors are getting smarter. Robots are now assembling components, catching defects, moving materials, and working beside human teams at a scale that didn’t exist a few years ago.

Specifically, advanced robotics in manufacturing is shifting toward systems that respond to variation and make decisions in real time. This is happening across industries, from robotics in automotive manufacturing to aerospace, electronics, and consumer goods.

What’s driving this shift isn’t just hardware. The robots making it possible are increasingly software-driven, using AI and machine learning to interpret their environment and act on it.

Consider a welding robot. Beyond precise movement, it needs to identify alignment points with millimeter accuracy while accounting for shifting parts, reflective surfaces, heat distortion, and variable lighting. What a human can handle instinctively, a robot has to be explicitly taught.

Welding is one common use case for robotics in manufacturing. (Source: Pexels)

Teaching a robot to handle that starts with data. AI in robotics draws on visual, spatial, and motion inputs from cameras, LiDAR, and depth sensors across the floor, but raw data alone doesn’t produce understanding.

Annotated data is what transforms sensor input into information that robots can be trained to understand, enabling robots to detect components, read spatial relationships, and execute tasks reliably when conditions change.

Let’s explore how robotics is used in manufacturing today and why data annotation is the foundation that makes automation and robotics in manufacturing actually work.

Before we dive in, let’s take a step back and understand what robotics in manufacturing actually means today, because the answer looks different from a decade ago.

Traditionally, automation and robotics in manufacturing were built around fixed, repetitive workflows. Industrial robots were programmed to perform the same motion repeatedly in highly controlled environments where every part, position, and movement was predictable.

Today, manufacturing environments are far more dynamic. Smart automation and robotics in manufacturing are increasingly sensor-driven and adaptive, with systems that interpret their environment, adjust to variation, and improve over time.

The evolution of robotic hardware also reflects this. Articulated robotic arms handle precision tasks like welding and assembly. Collaborative robots, or cobots, work alongside human operators on flexible lines. Autonomous mobile robots, or AMRs, navigate factory floors without fixed tracks or human guidance.

A Collaborative Robot Arm Can Be Used in Manufacturing Environments (Source: Pexels)

The common thread across all of them is data. Unlike traditional automation, cutting-edge robotics applications in manufacturing learn and adapt from data, which is what makes them capable of keeping up with the complexity of real production floors.

Another interesting question is: why are manufacturers moving toward more advanced robotics systems now?

One of the biggest drivers is labor pressure. Manufacturers across industries are facing ongoing labor shortages, rising operational costs, and increasing difficulty filling repetitive or physically demanding roles. At the same time, production demands continue to rise.

Robots help close that gap, handling high-volume, repetitive, or hazardous tasks that are increasingly difficult to staff consistently. Consistency is another major factor. At production scale, even small variations in quality add up quickly. Robotics in manufacturing delivers the same precision repeatedly, reducing defects, minimizing waste, and keeping rejection rates low across thousands of cycles.

There’s also the simple advantage of uptime. Robots don’t fatigue, lose focus, or need shift changes. That makes 24/7 operation achievable.

But perhaps the most important shift in how manufacturers think about automation and robotics in manufacturing is the move away from replacement toward augmentation. Next-gen robots handle tasks that are dangerous, repetitive, or physically demanding, freeing human workers to focus on judgment-driven work such as quality oversight, process improvement, and exception handling. The result is a production floor where humans and robots each do what they’re best at.

Next, let’s walk through some common robotics applications in manufacturing. While industrial robots were once limited to repetitive assembly line tasks, robotics in manufacturing now spans everything from precision welding and quality inspection to warehouse logistics and autonomous material movement.

An Example of Robots Working With Humans (Source: Pexels)

Here’s an overview of where robotics is making the biggest impact on the production floor:

For a robot to perform reliably on a production floor, it first needs to understand its environment. That’s trickier than it sounds.

Smart manufacturing robots are equipped with an array of perception tools to do this. Cameras capture visual data across the production line, while depth sensors measure spatial relationships and distances between objects.

Force sensors, meanwhile, detect resistance and pressure during physical tasks like assembly or insertion. Together, these systems generate enormous volumes of raw data in real time.

Raw data alone, however, doesn’t directly translate into understanding. AI models are integrated into robotic systems to process and interpret that data, but these models need to be trained before they can make sense of what they’re seeing. They don’t inherently know what a defect looks like, where a component should be positioned, or how to differentiate one part from another on a fast-moving production line.

That training depends on annotated data. When sensor data is labeled, whether that means identifying objects in an image, marking spatial boundaries, or flagging anomalies in a visual feed, it gives AI models the context they need to learn.
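To make the idea of labeled sensor data concrete, here is a minimal sketch of what a single annotated camera frame might look like in code. The class names, field names, and example categories (`BoxLabel`, `AnnotatedFrame`, "bracket", "scratch") are illustrative assumptions, not a format the article or any particular tool prescribes.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BoxLabel:
    """One labeled object: a class name plus a pixel-space bounding box."""
    category: str              # hypothetical class name, e.g. "bracket"
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    is_anomaly: bool = False   # set when the label marks a defect or anomaly

    def area(self) -> float:
        width = max(0.0, self.x_max - self.x_min)
        height = max(0.0, self.y_max - self.y_min)
        return width * height


@dataclass
class AnnotatedFrame:
    """All labels attached to one image from a (hypothetical) camera feed."""
    frame_id: str
    width: int
    height: int
    labels: List[BoxLabel] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the frame passes."""
        problems = []
        for i, box in enumerate(self.labels):
            if box.area() <= 0:
                problems.append(f"label {i}: empty box")
            if (box.x_min < 0 or box.y_min < 0
                    or box.x_max > self.width or box.y_max > self.height):
                problems.append(f"label {i}: box outside image")
        return problems

    def anomalies(self) -> List[BoxLabel]:
        """Labels flagged as anomalies, e.g. visible defects."""
        return [box for box in self.labels if box.is_anomaly]


# Example: one part and one flagged surface defect in a 1280x720 frame.
frame = AnnotatedFrame("cam1_000042", 1280, 720, [
    BoxLabel("bracket", 100, 200, 300, 400),
    BoxLabel("scratch", 150, 250, 180, 260, is_anomaly=True),
])
```

Even a simple structure like this covers the three kinds of labeling mentioned above: the category identifies the object, the box marks its spatial boundary, and the flag records an anomaly. The `validate` check is one example of the basic sanity checks a labeling pipeline might run before data reaches training.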

The more accurate and representative the annotated data is, the more reliably the robot performs when conditions change on the floor. That’s why data annotation isn’t a back-end technical detail. It’s a core part of what makes robotic perception work well.

Robotic data annotation is the process of labeling raw sensor data so that AI models can learn to interpret it and act on it. It covers a wide range of techniques, depending on what type of data is being labeled and what the robot needs to learn from it.

Here’s a glimpse of what that actually looks like across different data types:

Teams annotating robotic data for manufacturing environments often find that the work is far more context-dependent than annotation for other AI applications.

Production floors aren’t controlled environments. Lighting changes throughout the day, parts arrive in different orientations, and no two facilities are laid out the same way.

A defect that looks one way in an automotive plant looks completely different in an electronics or food production setting. That variability means annotation can’t be standardized or reused across contexts. It has to be specific to the environment, the product, and the task, and it has to stay accurate as those conditions evolve.

The challenge grows when you factor in how much data manufacturing robots actually generate. These systems draw from multiple sources at once (visual feeds, depth readings, force sensor data, and motion inputs), and all of it needs to be aligned and annotated together to be useful. On an active production line running around the clock, that data volume is substantial.
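As a rough illustration of what aligning multiple sensor streams can involve, the sketch below pairs each camera frame with the nearest depth and force sample by timestamp. It assumes each stream is a sorted list of timestamps in seconds; the function names and the 20 ms tolerance are hypothetical choices for the example, not values taken from any specific system.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple


def nearest_index(timestamps: List[float], t: float) -> Optional[int]:
    """Index of the timestamp closest to t, or None if the list is empty.

    Assumes timestamps are sorted in ascending order.
    """
    if not timestamps:
        return None
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Choose whichever neighbour is closer to t.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align(camera_ts: List[float],
          depth_ts: List[float],
          force_ts: List[float],
          tolerance_s: float = 0.02) -> List[Tuple[int, Optional[int], Optional[int]]]:
    """For each camera frame, find the nearest depth and force samples.

    Returns (camera_index, depth_index, force_index) tuples. A sensor index
    is None when no sample falls within tolerance_s of the camera frame.
    """
    aligned = []
    for cam_idx, t in enumerate(camera_ts):
        row: List[Optional[int]] = [cam_idx]
        for stream in (depth_ts, force_ts):
            j = nearest_index(stream, t)
            if j is not None and abs(stream[j] - t) <= tolerance_s:
                row.append(j)
            else:
                row.append(None)
        aligned.append((row[0], row[1], row[2]))
    return aligned


# Example streams: the depth sensor drops out near the third camera frame.
camera = [0.00, 0.10, 0.20]
depth = [0.01, 0.11, 0.35]
force = [0.0, 0.005, 0.1, 0.195]
pairs = align(camera, depth, force)
```

Frames where a sensor has no sample within the tolerance come back with `None` in that slot, so an annotation workflow could skip or flag them rather than labeling mismatched data.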

At that scale, even small inconsistencies in labeling translate into real performance problems on the floor. For manufacturers serious about getting robotics right, annotation quality isn’t a back-end concern. It’s a strategic one.
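One common way to catch labeling inconsistencies is to have two annotators label the same frames and compare their bounding boxes using intersection over union (IoU). The sketch below assumes boxes are `(x_min, y_min, x_max, y_max)` tuples keyed by an object ID; the function names and the 0.8 agreement threshold are illustrative assumptions, not a standard the article specifies.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes, in [0, 1]."""
    overlap_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    overlap_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    intersection = overlap_w * overlap_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def flag_disagreements(first: Dict[str, Box],
                       second: Dict[str, Box],
                       threshold: float = 0.8) -> List[str]:
    """Object IDs where two annotators disagree.

    An object is flagged if only one annotator labeled it, or if the
    two boxes overlap with IoU below the (assumed) threshold.
    """
    flagged = []
    for obj_id in sorted(set(first) | set(second)):
        if obj_id not in first or obj_id not in second:
            flagged.append(obj_id)
        elif iou(first[obj_id], second[obj_id]) < threshold:
            flagged.append(obj_id)
    return flagged


# Example: the annotators agree on "a", differ on "b", and only one labeled "c".
annotator_1 = {"a": (0, 0, 10, 10), "b": (0, 0, 10, 10), "c": (0, 0, 1, 1)}
annotator_2 = {"a": (0, 0, 10, 10), "b": (5, 0, 15, 10)}
needs_review = flag_disagreements(annotator_1, annotator_2)
```

Flagged objects can then be routed to a reviewer, which keeps small disagreements from accumulating across a large dataset.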

The gap between a robotics pilot and a full production deployment often comes down to one thing: data quality.

At Objectways, our team of over 2,200 annotation specialists across the US and India understands that better than most. We bring hands-on expertise to some of the most complex data challenges in AI, including robotics in manufacturing.

We work closely with robotics teams to understand the environment, the task, and the variability they’re dealing with, and we build data collection and annotation workflows around that reality.

We handle the full range of data types that manufacturing robots depend on, from computer vision and object detection to egocentric video, teleoperation data, gripper data, and motion capture. Whether you need general-purpose labeling or highly specialized annotation for a specific production environment, our team has the experience to deliver data that holds up on the floor, not just in a test setting.

Great robotics deployments start with the right data partner. (Source: Pexels)

We’re also SOC 2 Type 2 and ISO 27001 certified, so teams working with sensitive production data can trust that it’s in safe hands.

If your team is working to move from pilot to production, or looking to improve the reliability of an existing robotics deployment, we’d love to help. Reach out, and let’s talk about getting your robots production-ready.

The direction manufacturing robotics is heading is clear: toward systems that don’t just execute instructions but reason about their environment, improve as they operate, and handle complexity.

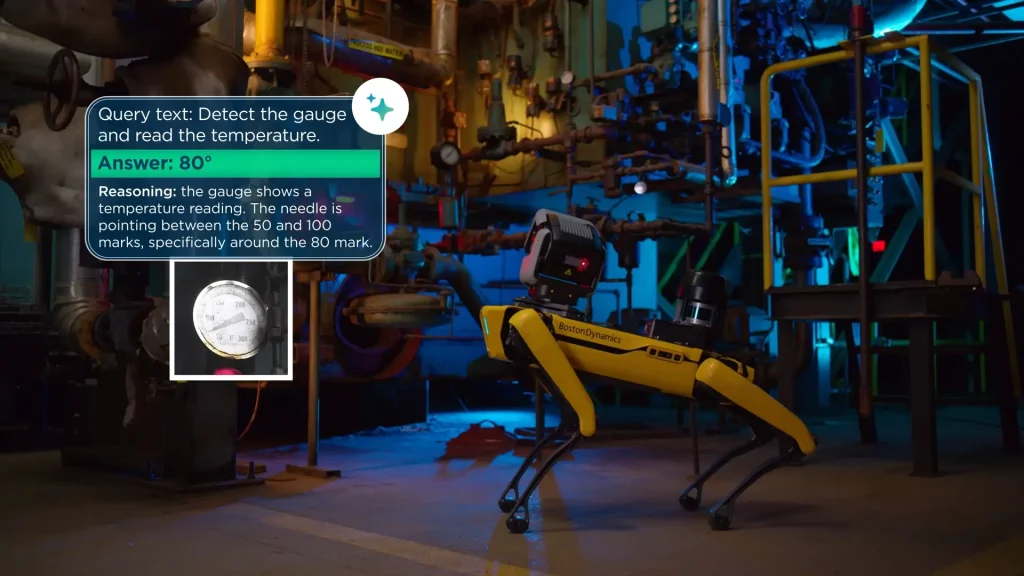

What’s less obvious is what’s holding that future back. Boston Dynamics recently equipped its Spot robot with Google DeepMind’s Gemini Robotics reasoning model, enabling it to autonomously inspect facilities, identify hazards, and read complex gauges.

Spot autonomously detecting and reading a temperature gauge in an industrial facility. (Source)

But even at that level of capability, data is the limiting factor. Carolina Parada, Head of Robotics at Google DeepMind, said it plainly: “There is lots of visual information on the web about how to pick up a pen. If we had enough data with touch information, we could easily learn it, but there is not a lot of data with touch sensing on the internet.”

That dynamic plays out across manufacturing robotics more broadly. Multimodal data is becoming the standard for next-generation systems, but how well it’s annotated determines how far those systems can actually go.

Manufacturers investing in data infrastructure now are building a long-term edge. The robots of the next five years will learn continuously from their environments. The teams that have already built the data foundation will be the ones best positioned to take advantage of that.

Robotics in manufacturing isn’t a question of if or when. The technology is here, and it’s being deployed across automotive, electronics, aerospace, and consumer goods at a scale that continues to grow.

The real question now is how well those robots actually perform once they’re on the floor. And that comes down to data.

Hardware defines what a robot is capable of, while data annotation determines how consistently it delivers on that capability. A robot that can perceive its environment but can’t reliably interpret what it’s seeing will always fall short in production. It’s the quality of the training data, specifically how accurately it’s been labeled, that turns a capable robot into a reliable one.

For manufacturers serious about getting robotics right, the data foundation isn’t an afterthought. It’s where the work starts.

Robots are used across the production process for tasks like assembly, welding, quality inspection, material handling, and packaging, handling high-volume, precision work more consistently than manual labor.